Doomvertising

AI companies want to scare you, an infinite haystack, and supply chain hacking (dumb)

Hello, welcome to the latest issue of Endnotes! I don't know what's going on, and I don't know how to know what's going on, but I promise to get to the bottom of it. Please join me on my epistemological journey. If you need to contact me, you can reach me on Mastodon.

This week:

- They want to scare you

- Infinite haystacks

- Supply chain blues

- News links

Let's get to it.

They want to scare you

I was thinking about writing something about the Palantir super villain manifesto, but it's starting to feel like a bit. Really? You named your company after an evil device that makes everyone who gazes at it go insane, and now your crazy CEO sounds like if Ayn Rand fucked Bane? Of course they know they look like cartoon fascists. What I am kind of interested in, though, is why they might do this.

If you step back for a second, there's a pattern to how AI companies market themselves. Let's coin a cool neologism that no one will ever use again. How about "doomvertising"? You generate buzz for your boring SaaS thing or expensive toy by making everyone afraid of it. We all know negative emotions produce stickier engagement with social media algorithms, and these companies are riding that on purpose.

OpenAI does this constantly with its new models, leaking to the media that the CEO is afraid of the latest one, or that it's too dangerous to release to the public. The media lap it up and put it in the headline, then it gets shared all over social media, then the new model is released and it turns out to be fine, normal, perhaps even bad.

Anthropic is doing the same thing with its "Mythos" marketing: make a big, scary announcement about how your new model will End Computing As We Know It, the tech-illiterate press covers it breathlessly, there are meetings at the White House, but then the actual computer scientists and security researchers weigh in and the conclusion is: meh.

The weirdest example of this is probably Friend AI. A Harvard dropout kid convinced someone to give him a bunch of money for his AI pendant startup, and apparently spent a lot of it on marketing in the New York City Subway. Last year, the company blanketed the walls and trains with messages like "friend: Someone who listens, responds, and supports you," "I'll never leave dirty dishes in the sink," and "I'll binge the entire series with you."

Dark stuff. Of course, people were furious and defaced the ads, posting the results to Instagram. Suddenly, everyone was talking about Friend AI, which, frankly, the founder kid seemed pretty fuckin' smug about!

I don't think he planned for his ad campaign to be rage bait, but he definitely recognized the dynamic once it started and leaned into it. They rolled out the same campaign in other cities, with the same result. A few months later, for the company's next act, they commissioned a series of short "user interviews," which are among the most harrowing things I've ever watched. For that reason, I actually shared this one the first time I saw it. He got me, I took the rage bait:

I have since become convinced they are fake. This one is just absurd. Come on, you expect me to believe these people are real, and would let themselves be filmed like this? At this point, I think Friend AI is deliberately trolling, as a marketing strategy. They want us to watch their thing, feel doom, and then share their brand with our networks. Doom scrolling. Doom sharing. Doomvertising.

To be clear, I don't think this is just marketing. Dario Amodei genuinely believes Claude has a soul and they are coding God! Avi Schiffmann thinks real people are boring and everyone would be happier talking to an LLM instead! Alex Karp wants to end democracy and replace the liberal, humanist, pluralist project with some combination of Gattaca and Equilibrium.

But they've tried standard capitalist-consumer tech marketing schlock and it doesn't work because normal people fucking hate AI. So they get our attention with doom, the kind of doom whose message is, "This technology is powerful, it will change everything, and it is inevitable. We are inevitable. Be afraid."

Infinite haystacks

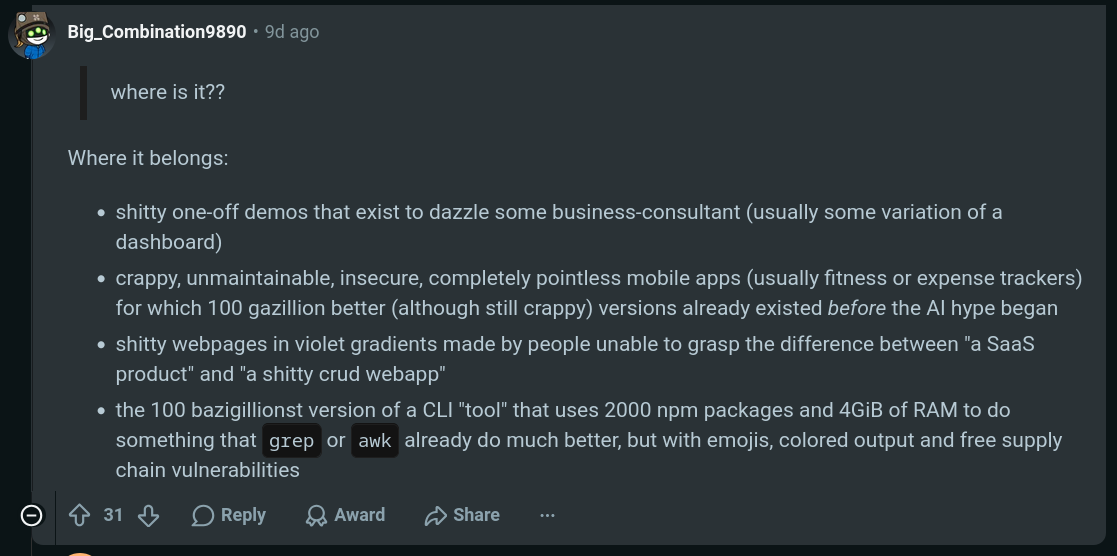

Someone posted a great question on the /r/BetterOffline subreddit: If anyone can code now, where is all the new software? I think this comment sums it up pretty nicely.

You haven't noticed all the new software because it's mostly crap that isn't making anyone's life easier or better. It's just more stuff. And there is more of it.

In the first quarter of 2026, Apple's app store saw an 84% increase in the number of new apps compared to the same time last year, so people are definitely shipping more software. But is more better?

Think of it this way: The task of any search feature is to help you find a needle in a haystack. You need to find a good fitness tracking app. That's the needle. There are millions of apps. That's the haystack. The search bar in the app store helps you sort through that haystack to find an app that meets your needs.

But when anyone can code up something that looks like an app and put it on the app store, it makes the haystack infinitely larger. Finding the needle becomes impossible, and the whole ecosystem degrades.

This goes for apps, but it also goes for webpages, books, academic articles, news articles, poetry, song lyrics, essays, how-to guides, instruction manuals, and anything where you might have a large archive of material you need to search to find what you need. The real danger of generative AI is that it can overwhelm our archival systems, flooding the information ecosystem with so much slop that the good information can never be found.
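If you want the haystack problem as back-of-the-envelope arithmetic, here is a minimal sketch. Every number in it is made up for illustration: a hypothetical store with 100 genuinely good apps, and a hypothetical search feature that surfaces 90% of the good ones but also lets 1% of the junk through. Those rates are assumptions, not measurements of any real app store.

```python
def share_of_good_results(good, junk, recall=0.9, false_positive_rate=0.01):
    """Fraction of search results that are actually good apps.

    All parameters are hypothetical:
      good                 number of genuinely useful apps
      junk                 number of low-quality apps
      recall               share of good apps the search surfaces
      false_positive_rate  share of junk apps the search surfaces anyway
    """
    good_hits = good * recall
    junk_hits = junk * false_positive_rate
    return good_hits / (good_hits + junk_hits)


# Keep the needles fixed and grow the haystack.
for junk in (10_000, 100_000, 1_000_000):
    share = share_of_good_results(good=100, junk=junk)
    print(f"{junk:>9,} junk apps -> {share:.1%} of results are good")
```

The search feature never gets any worse in this toy model. The only thing that changes is how much hay there is, and that alone is enough to bury the needles.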

Supply chain blues

This one is a little bit in the weeds, but I think it's an important example of how we are living in Dumb Neuromancer. Here's a great blogpost summarizing what happened, and here's the full report on the incident from Trend Micro.

The story: Someone working for Context, one of the hungry "automate your work" AI startups, wanted to cheat at Roblox (lol). So they downloaded a cheat that contained malware onto a PC that had access to their work credentials.

Oops!

The malware stole the person's credentials, and with those credentials, the attackers were able to access Context's systems. Like lots of AI apps, this startup's app required users to provide credentials so it could access their e-mail and other personal accounts to do personal assistant-type stuff. Someone who works for a company called Vercel was using the app, and the attackers were able to steal that person's credential and use it to enter Vercel's systems.

Oh no!

Now this is extra bad, because Vercel is a platform that hosts apps people have made. Literally hundreds of thousands of apps. And it turns out Vercel has a security problem, which is that it left exposed certain app environment variables that were set as "not sensitive," making them easy to steal. Some of them were things like API keys.

Yikes!

So the attackers were able to steal valuable data. Fortunately, when they tried to sell some of it on the dark web, it tripped alarms at OpenAI, which cancelled the API keys and alerted users. But it took nine days for Vercel to inform its users that its platform had been pwned.

So to sum up: Someone trying to cheat at a video game caused a breach at a random AI startup that cascaded all the way up to a major hosting platform.

IT systems have always been vulnerable to unauthorized access. Cybersecurity folks will tell you: It is a never-ending battle. But with the rise of vibe coding and a generation of software developers who came up relying on frameworks, libraries, OAuth, third-party hosting, and cloud, this incident feels emblematic of how quickly one person's stupid mistake can now daisy-chain into the compromise of an entire ecosystem.
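For the developers reading: the "not sensitive" detail is the kind of thing you can roughly check for yourself. Below is a minimal sketch, assuming you can export your environment variable names along with whether each one is flagged sensitive. The dictionary format, the variable names, and the name pattern are all hypothetical. This is not Vercel's API or tooling, just a name-based sanity check that will miss plenty.

```python
import re

# Hypothetical heuristic: names containing these words probably hold secrets.
SECRET_NAME = re.compile(r"(KEY|TOKEN|SECRET|PASSWORD|CREDENTIAL)", re.IGNORECASE)


def misflagged_variables(variables):
    """Return names that look like secrets but are not marked sensitive.

    `variables` maps a variable name to True if it is flagged sensitive.
    This format is an assumption made for the example.
    """
    return sorted(
        name
        for name, sensitive in variables.items()
        if not sensitive and SECRET_NAME.search(name)
    )


# Made-up example data.
example = {
    "OPENAI_API_KEY": False,    # looks like a secret, not flagged
    "DATABASE_PASSWORD": True,  # flagged correctly
    "PUBLIC_SITE_URL": False,   # harmless
    "stripe_secret": False,     # looks like a secret, not flagged
}

print(misflagged_variables(example))
```

A check like this only looks at names, so a secret stored under an innocent-sounding name slips right past it. Treat it as a smoke alarm, not a security audit.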

News links

- One of my favorite podcasts, Kill the Computer, just released a very unique website. Check it out, it's fun! There's games and stuff.

- Someone might be killing American "nuclear and space defense" scientists. Eleven members of the small community have died or disappeared since 2022, under similar circumstances.

- Microsoft is set to switch to token-based billing and rate limiting for its GitHub Copilot AI thingy.

- A county board in Minnesota has blocked construction of a $4 billion data center by refusing to approve a zoning variance.

- Here's a large and growing list of AI fails.

- The (alleged!) incel mass shooter at FSU last year had long, explicit conversations with ChatGPT about suicide, mass shootings, and stalking an underage girl.

- SpaceX has entered into some kind of weird almost-deal to buy Cursor for $60 billion, or give it $10 billion for not buying it, or partner on model training? It's not clear.